Deepfakes, Helpdesk Fraud, and Account Takeover: The Identity Crisis Redefining Enterprise Security in 2026

The attack that brought MGM Resorts International to its knees didn’t start with a zero-day exploit or a sophisticated malware payload. It started with a phone call.

A member of the threat group Scattered Spider identified an employee on LinkedIn, called MGM’s IT helpdesk, and convinced a support agent to reset that employee’s credentials. That single interaction gave the attacker super administrator privileges. Within days, slot machines went dark across Las Vegas and MGM was facing $100 million in losses. Caesars Entertainment, hit by the same group days earlier, quietly paid a $15 million ransom.

Neither breach involved a technical exploit; both succeeded through a convincing conversation and a helpdesk agent who had no objective way to verify the caller’s identity.

That is the identity problem facing every organization with a workforce, a helpdesk, and a cloud-based identity provider in 2026.

The Attack Kill Chain: From LinkedIn to Lockout

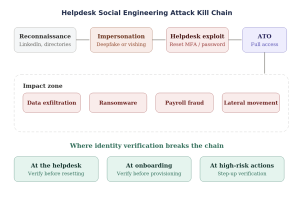

MGM was not an outlier. The same sequence appears across industries with enough regularity to form a recognizable kill chain:

- An attacker identifies a high-privilege employee on LinkedIn

- They impersonate that employee using social engineering or deepfake voice technology

- They contact the IT helpdesk requesting a password reset or MFA deactivation

- They take over the account

- They exfiltrate data, deploy ransomware, or redirect payroll

Figure 1 illustrates this kill chain and highlights the critical intervention point where identity verification breaks it.

Figure 1: Helpdesk social engineering attack kill chain and identity verification defense points

Key takeaway: The intervention point that breaks this kill chain is identity verification at the helpdesk. If the caller’s identity is cryptographically confirmed before any credentials are reset, the attacker’s impersonation is blocked regardless of how convincing it may be.

The Threat Landscape: By the Numbers

Deepfakes, helpdesk fraud, and account takeover (ATO) are mainstream attack vectors. The numbers from 2025 reflect how operationally mature these tactics have become:

- 62% [1] of organizations were victims of deepfake-related attacks in 2025.

- Over 5,100 [2] account takeover cases were reported to the FBI, with losses exceeding $262 million.

- Deepfake content is projected to account for 40% of all biometric fraud attempts, with US losses alone reaching $547 million [3] in the first half of 2025.

- Only 13% of organizations have a formal plan for responding to deepfake attacks.

Poor human detection accuracy compounds the problem. A 2025 iProov [4] study found that average human accuracy for identifying high-quality deepfake videos was around 24.5%. With a baseline this low, security awareness training built on “spot the fake” is an unreliable control.

| Metric | Figure | Source |
|---|---|---|
| Deepfake fraud surge (3-year) | 2,137% increase | Keepnet Labs, 2026 |
| US deepfake losses (H1 2025) | $547 million | Zero Threat, 2026 |
| Organizations hit by deepfakes | 62% in 2025 | Gartner |
| FBI ATO complaints (2025) | 5,100+ / $262M+ lost | FBI IC3 |
| Human deepfake detection accuracy | 24.5% for video | iProov, 2025 |
| Deepfake attack frequency | 1 every 5 minutes | Entrust, 2024 |
| Orgs facing 1+ ATO attacks | 83% (half had 5+) | Trusona, 2025 |
| Companies with anti-deepfake plans | Only 13% | Programs.com, 2025 |
| Average cost per data breach | $4.44 million | Global avg., 2025 |

Deepfakes and Synthetic Identities: The Twin Threat

Although deepfakes and synthetic identities are related, they are two distinct attack vectors. Conflating them leads to incomplete defenses.

Deepfakes impersonate real employees, typically through helpdesk fraud and account takeover, where a convincing voice or video is enough to defeat knowledge-based verification. The technology is now accessible enough that deepfake attacks occur roughly once every five minutes globally.

Synthetic identities fabricate entirely new personas. Rather than impersonating someone who exists, attackers build a persona from scratch using AI-generated photos, fabricated employment histories, and constructed online profiles across LinkedIn and GitHub. The goal is typically hiring fraud or contractor infiltration, where the synthetic identity passes background checks because the data used to verify it was itself fabricated.

Microsoft tracks a North Korean operation called Jasper Sleet [5] where operatives use AI-generated headshots, voice-changing software, and fabricated credentials to get hired at legitimate companies. The DOJ has dismantled networks of laptop farms spanning 16 states that made North Korean workers appear domestic. Over 300 companies across IT, healthcare, finance, and energy were infiltrated between 2020 and 2023. The initial access vector in every case was the hiring process, not a technical exploit.

Both threats converge at the same gap: organizations verify identity once at hire, then trust credentials from that point onward.

The Helpdesk Is a High-Value Target

IT helpdesks are targeted because they are high-authority access control points. A successful helpdesk interaction can reset credentials, modify MFA enrollment, and restore account access. The workflows are designed for speed and usability, which means verification typically relies on knowledge-based questions and human judgment.

Knowledge-based verification has two problems in the current environment. First, the answers to security questions are increasingly available through LinkedIn, prior data breaches, and social media. Second, human judgment is not reliable against a convincing deepfake voice. Okta Threat Intelligence [6] tracks a cluster designated O-UNC-034 where helpdesk interactions are used specifically to take over accounts and redirect payroll or escalate privileges. The tactic persists because the verification model it exploits has not materially changed.

Scattered Spider’s playbook works because it targets a process gap, not a technical vulnerability. The solution isn’t more security-awareness training for helpdesk agents; it is removing subjective human judgment from the verification decision entirely.

Account Takeover: The Objective Behind the Tactics

Once an attacker controls a legitimate employee’s account, they inherit that employee’s access, trust level, and permissions. Detection becomes significantly harder because the activity looks like the legitimate user.

83% of organizations reported at least one account takeover attack in 2025, with half of those reporting five or more. SIM-swapping, which directly undermines phone-based multi-factor authentication (MFA), surged over 1,000% [7] in 2024. In the UK, 48% of account takeovers involved mobile phone accounts. Phone-based second factors are an increasingly unreliable control in an environment where SIM-swapping is this accessible.

Authentication that relies on credentials and phone-based MFA can be defeated through social engineering before any technical exploit is needed. To prevent this, the identity verification layer must sit upstream of authentication, at the point where credentials are issued or reset.

Where Identity Verification Closes the Gap

Identity verification addresses specific areas in the identity lifecycle where traditional IAM is most exposed: account recovery, helpdesk interactions, device enrollment, and privilege escalation. These are transitions where identity must be re-established rather than simply re-authenticated.

Instead of security questions or phone codes, organizations verify the person against a government-issued photo ID matched to a live biometric capture with built-in deepfake detection. The verification is objective, automated, and does not depend on an agent’s ability to detect a convincing impersonation.
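To make the idea of an objective, automated decision concrete, here is a minimal Python sketch of a helpdesk reset gate. It assumes a hypothetical identity verification service that returns four signals; the `VerificationResult` fields, the `FACE_MATCH_THRESHOLD` value, and the function name are illustrative assumptions, not any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"


@dataclass(frozen=True)
class VerificationResult:
    """Signals from a hypothetical identity verification service."""
    document_authentic: bool  # ID document passed authenticity checks
    face_match_score: float   # ID photo vs. live selfie similarity, 0.0 to 1.0
    liveness_passed: bool     # a live person was present at capture
    injection_detected: bool  # capture was fed in by a virtual camera or similar


# Hypothetical threshold; a real deployment would tune this value.
FACE_MATCH_THRESHOLD = 0.90


def credential_reset_decision(result: VerificationResult) -> Decision:
    """Allow a credential reset only when every automated check passes.

    There is deliberately no agent-override parameter, so a persuasive
    caller has no subjective decision to manipulate.
    """
    checks = (
        result.document_authentic,
        result.face_match_score >= FACE_MATCH_THRESHOLD,
        result.liveness_passed,
        not result.injection_detected,
    )
    return Decision.ALLOW if all(checks) else Decision.DENY


# A convincing face match still fails if the liveness check does not pass.
print(credential_reset_decision(VerificationResult(True, 0.97, False, False)))
```

The design choice worth noting is the absence of any manual approval path: the agent relays the outcome but cannot change it.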

Traditional IAM vs. Identity Verification-Enhanced IAM

| Scenario | Traditional IAM (Vulnerable) | With Identity Verification (Resilient) |
|---|---|---|
| Helpdesk reset | Agent asks security questions that are easily defeated with answers from LinkedIn and prior data breaches. | User scans photo ID + live selfie with deepfake detection; cryptographic match before credential change. |
| MFA re-enrollment | Manager approval via email/Slack can be easily spoofed; SIM-swaps intercept OTPs. | Step-up biometric verification tied to government ID; person is verified, not the device. |
| Remote onboarding | HR checks documents over video call; deepfake candidates pass with AI-generated faces. | Automated IDV matches government ID to live biometric with injection attack detection. |
| Privileged action | Relies on existing session + MFA; if hijacked via ATO, attacker is already authenticated. | Risk-based step-up triggers fresh biometric check before sensitive operations. |
| Deepfake video call | Human judgment is only 24.5% accurate; 60% of people overestimate their ability [4]. | AI-powered liveness detection analyzes micro-expressions and pixel artifacts in real time. |
| Synthetic identity at hiring | Background checks verify data that may itself be fabricated; no biometric or document authenticity layer to catch AI-generated personas. | Document authenticity analysis, biometric liveness detection, and cross-referencing against authoritative sources catch synthetic identities before they’re onboarded. |

Gartner [8] predicts that by 2026, 30% of enterprises will no longer consider identity verification and authentication solutions reliable in isolation because of deepfakes. Defending against these threats means integrating stronger identity verification at the specific workflow points where impersonation risk is highest.

4 Best Practices for Detecting Deepfakes and Synthetic Identities

1. Hiring and Onboarding

Document authenticity checks need to go beyond OCR to catch tampered or AI-generated ID documents. Biometric matching with liveness detection stops face-swapped photos and injection attacks. Cross-referencing identity data against authoritative sources flags synthetic personas before they are onboarded.

2. Helpdesk and Account Recovery

Real-time liveness detection during selfie or video-based verification stops deepfake impersonation at the point where credentials are most vulnerable. Injection attack prevention ensures the biometric sample comes from a live camera rather than an AI-generated feed being pushed into the verification pipeline.

3. Ongoing Access

Behavioral biometrics and device telemetry provide a continuous signal layer beyond point-in-time verification. Machine learning models trained on employee-specific baselines, including typing cadence, login patterns, and device fingerprints, can flag account sharing, credential handoffs, and the kind of behavioral shift seen in Jasper Sleet where a vetted hire is quietly replaced by a different operator.
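As a rough illustration of an employee-specific baseline, the Python sketch below flags an observation that sits far from a user's own history. It swaps the machine learning models described above for a plain z-score test on a single signal, which is an assumption made to keep the example small; the function name, the sample values, and the threshold of 3.0 are all hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(baseline: Sequence[float], observed: float,
                 z_threshold: float = 3.0) -> bool:
    """Flag an observation far outside this employee's own baseline.

    A simple z-score stand-in for a trained behavioral model.
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # No variation in the baseline: any different value is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


# Hypothetical average inter-key intervals, in milliseconds, per session.
baseline_ms = [182, 175, 190, 178, 185, 181, 176, 188]
print(is_anomalous(baseline_ms, 184))  # False: within the usual range
print(is_anomalous(baseline_ms, 110))  # True: far faster than this user's norm
```

A production system would combine many signals and tolerate gradual drift, but the principle is the same: the comparison is against the individual's history, not a population average.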

4. Across All Touchpoints

AI-powered detection models must be tested against adversarial conditions, not lab benchmarks. The real-world effectiveness of deepfake detection tools drops 45-50% outside controlled environments. Require adversarial testing results from any solution under evaluation.

Building a Resilient Workforce Identity Strategy

Most organizations verify an employee’s identity once at hire and trust credentials from that point onward. This is the gap attackers exploit.

To close the gap, a shift is required from one-time identity proofing at onboarding to continuous verification of the person behind the account. NIST SP 800-63-4, published in August 2025, formalized this by separating identity proofing assurance from authentication assurance, recognizing that strong login security is inconsequential if the underlying identity was never properly established or has since been compromised.

Making Re-Verification Practical

Full document-plus-biometric verification at every critical moment is not operationally scalable, but two approaches make continuous re-verification practical:

Verifiable credentials in digital wallets allow organizations to invest heavily in the initial identity proof, then issue a cryptographically signed credential to the employee’s digital wallet. Future re-verification validates that credential instead of repeating the full proofing process. The employee presents the credential, the system verifies the cryptographic signature, and trust is re-established in seconds.

Silent biometric enrollment registers the employee’s biometric during initial verification. Subsequent re-verification only requires a quick selfie match rather than a full document scan, making re-proofing practical at scale for helpdesk interactions, device enrollments, and privilege escalations.

Both approaches follow the same principle: proof once at high assurance, then leverage that trust anchor for lightweight re-verification throughout the employee lifecycle.
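The first approach, issuing a signed credential once and validating it cheaply later, can be sketched in Python as follows. This is a simplified stand-in, not a verifiable credential implementation: it uses an HMAC from the standard library, which is symmetric, whereas real wallet credentials rely on asymmetric signatures so that verifiers hold only a public key. The token layout, claim names, and function names are assumptions for illustration.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_credential(subject: str, issuer_key: bytes, ttl_seconds: int,
                     now: Optional[float] = None) -> str:
    """Issue a tamper-evident credential after high-assurance proofing."""
    issued_at = time.time() if now is None else now
    payload = json.dumps({"sub": subject, "exp": issued_at + ttl_seconds},
                         sort_keys=True).encode()
    tag = hmac.new(issuer_key, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode() + "."
            + base64.urlsafe_b64encode(tag).decode())


def verify_credential(token: str, issuer_key: bytes,
                      now: Optional[float] = None) -> Optional[str]:
    """Return the subject if the credential is intact and unexpired, else None."""
    try:
        payload_b64, tag_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        tag = base64.urlsafe_b64decode(tag_b64)
    except ValueError:  # wrong number of parts or malformed base64
        return None
    expected = hmac.new(issuer_key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        return None
    claims = json.loads(payload)
    current = time.time() if now is None else now
    if current >= claims["exp"]:
        return None
    return claims["sub"]


key = b"hypothetical-issuer-secret"
token = issue_credential("employee-1042", key, ttl_seconds=3600)
print(verify_credential(token, key))
```

Re-verification here costs one hash computation rather than a full document scan, which is what makes frequent checks practical.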

5 Best Practices for Workforce Identity Frameworks

- Separate Proofing from Authentication, Then Connect Them: Authentication validates credentials. Proofing validates the person. Align both to NIST SP 800-63-4’s IAL and AAL framework and treat them as complementary controls rather than interchangeable ones.

- Re-proof at Critical Moments: Account recovery, device enrollment, privilege escalation, and helpdesk interactions all require fresh identity proofing, not another MFA prompt. Verifiable credentials or biometric re-verification reduce the overhead of doing this consistently.

- Rely on Technology, Not Human Judgment Alone: Human accuracy for detecting high-quality deepfake videos is around 24.5%. Automated liveness detection and document verification need to replace subjective assessment at any point where a credential change can be initiated.

- Treat the Helpdesk as a Security Perimeter: Require identity proofing for all credential-altering requests. Scattered Spider’s approach fails when agents have no discretion to exercise and no subjective call to manipulate.

- Build a Reusable Trust Anchor: Whether through wallet-based credentials or enrolled biometrics, proof once at high assurance and re-verify frequently at lower cost. The initial proofing provides the foundation that all subsequent verifications inherit trust from.
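The first two practices above amount to a routing rule: some events need the person re-proofed, while others only need credentials checked. A minimal Python sketch of that rule follows; the event names and the `required_control` function are hypothetical, and the set of re-proofing events simply mirrors the critical moments listed in this article.

```python
from enum import Enum


class Event(Enum):
    ROUTINE_LOGIN = "routine_login"
    ACCOUNT_RECOVERY = "account_recovery"
    DEVICE_ENROLLMENT = "device_enrollment"
    PRIVILEGE_ESCALATION = "privilege_escalation"
    HELPDESK_CREDENTIAL_CHANGE = "helpdesk_credential_change"


class Control(Enum):
    AUTHENTICATE = "authenticate"  # validates credentials
    REPROOF = "reproof"            # validates the person


# Events where identity must be re-established, not just re-authenticated.
REPROOF_EVENTS = frozenset({
    Event.ACCOUNT_RECOVERY,
    Event.DEVICE_ENROLLMENT,
    Event.PRIVILEGE_ESCALATION,
    Event.HELPDESK_CREDENTIAL_CHANGE,
})


def required_control(event: Event) -> Control:
    """Route critical moments to identity proofing instead of another MFA prompt."""
    return Control.REPROOF if event in REPROOF_EVENTS else Control.AUTHENTICATE


for event in Event:
    print(event.value, "->", required_control(event).value)
```

Keeping the mapping in one explicit table makes it auditable: adding a new credential-altering workflow means adding one entry, not relying on each team to remember the rule.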

Only 13% of organizations have a formal plan for responding to deepfake attacks. The ones that close that gap and make re-verification operationally practical will be better positioned as these attacks continue to scale.

Citations:

1. https://www.gartner.com/en/newsroom/press-releases/2025-09-22-gartner-survey-reveals-generative-artificial-intelligence-attacks-are-on-the-rise

2. https://www.fbi.gov/investigate/cyber/alerts/2025/account-takeover-fraud-via-impersonation-of-financial-institution-support

3. https://zerothreat.ai/blog/deepfake-and-ai-phishing-statistics

4. https://www.iproov.com/press/study-reveals-deepfake-blindspot-detect-ai-generated-content

5. https://www.microsoft.com/en-us/security/blog/2025/06/30/jasper-sleet-north-korean-remote-it-workers-evolving-tactics-to-infiltrate-organizations/

6. https://www.okta.com/blog/threat-intelligence/help-desks-targeted-in-social-engineering-targeting-hr-applications/

7. https://www.trusona.com/blog/prevent-social-engineering-account-takeover-ciso-solution-guide

8. https://www.gartner.com/en/newsroom/press-releases/2024-02-01-gartner-predicts-30-percent-of-enterprises-will-consider-identity-verification-and-authentication-solutions-unreliable-in-isolation-due-to-deepfakes-by-2026